GPU offload in LM Studio refers to moving the work of running a large language model (LLM) from the CPU to the GPU to improve processing speed. LM Studio supports this on NVIDIA and AMD GPUs.

This article will show you how to run large language models on your computer using LM Studio. LM Studio works with macOS, Linux, and Windows. It’s easy to use and supports open-source models.

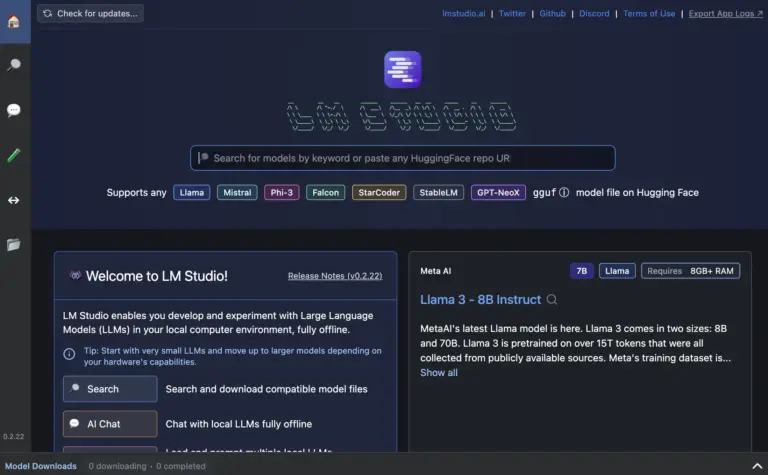

What is LM Studio?

LM Studio is a program that helps you run large language models on your computer. It works on macOS, Linux, and Windows. You can use it to process open-source models and tasks like text generation.

It supports both NVIDIA and AMD GPUs, making it faster for running machine learning models.

What Can You Do with LM Studio?

With LM Studio, you can run large language models on your computer to perform tasks like text generation, answering questions, and language processing. It works with both NVIDIA and AMD graphics cards, making it quicker and more effective for tasks in machine learning.

LM Studio works with open-source models and is compatible with macOS, Linux, and Windows.

What are the minimum hardware/software requirements?

The minimum hardware requirements for LM Studio include a computer with at least 8 GB of RAM and a modern processor. For better performance, it’s recommended to have an NVIDIA or AMD GPU.

Software-wise, LM Studio works with macOS, Linux, and Windows. GPU support relies on a GPU compute backend such as CUDA for NVIDIA GPUs or ROCm for AMD GPUs.

Installing LM Studio on Windows

To install LM Studio on Windows, first get the installer from the official website. Run the setup file and follow the instructions to complete the installation. Make sure your computer meets the minimum hardware requirements, like 8 GB of RAM.

For better performance, use an NVIDIA or AMD GPU with up-to-date drivers installed. For NVIDIA GPUs, this means a driver version that supports CUDA.

Running LM Studio

To run LM Studio, open the program after installation. Choose a model to download, or load a large language model (LLM) you already have. The software will use your computer’s CPU or GPU (if available) to process tasks like text generation.

For better performance, make sure your system meets the hardware requirements, like having an NVIDIA or AMD GPU.

Chat with your model

With LM Studio, you can chat with your model by selecting or uploading a language model. Once the model is loaded, type your questions or prompts, and the model will generate responses.

This lets you interact with the AI for tasks like answering questions or creating text, similar to a chatbot experience.

Chat with your documents

With LM Studio, you can chat with your documents by uploading them into the program. Once the documents are loaded, you can ask questions about their content.

The model will analyze the text and provide answers or summaries, making it easier to find information or understand the documents better. This feature helps you interact with your files effectively.

OpenAI-like Structured Output API

The OpenAI-like Structured Output API in LM Studio allows users to receive answers in a clear format. When you ask questions, the model can give responses with organized data, making it easier to read and understand.

This feature is useful for developers and users who want precise information in a structured way, improving interaction with the AI. For more information, you can look at the official instructions.
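
As a rough sketch of what a structured-output request can look like, the Python below builds an OpenAI-style chat completion payload with a `response_format` of type `json_schema`. The server address `http://localhost:1234/v1`, the model name `my-local-model`, and the `book` schema are assumptions for illustration. Check the official instructions for the exact fields your LM Studio version accepts.

```python
import json
import urllib.request

# Assumed address of LM Studio's local server; adjust to your setup.
BASE_URL = "http://localhost:1234/v1"

# Illustrative JSON schema describing the shape we want the answer to take.
BOOK_SCHEMA = {
    "type": "object",
    "properties": {
        "title": {"type": "string"},
        "author": {"type": "string"},
        "year": {"type": "integer"},
    },
    "required": ["title", "author", "year"],
}


def build_structured_request(prompt, schema, model="my-local-model"):
    """Build an OpenAI-style chat payload that asks for schema-shaped JSON."""
    return {
        "model": model,  # placeholder name, not a real model identifier
        "messages": [{"role": "user", "content": prompt}],
        "response_format": {
            "type": "json_schema",
            "json_schema": {"name": "book", "strict": True, "schema": schema},
        },
    }


def send(payload, base_url=BASE_URL):
    """POST the payload to the chat completions endpoint and parse the reply."""
    request = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        body = json.load(response)
    # The structured answer arrives as a JSON string in the message content.
    return json.loads(body["choices"][0]["message"]["content"])
```

With the local server running and a model loaded, `send(build_structured_request("Name a classic novel.", BOOK_SCHEMA))` would be expected to return a dictionary with `title`, `author`, and `year` keys.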

Automatic load parameters, but also full customizability

LM Studio offers automatic load parameters for easy setup while allowing full customizability for advanced users. This means you can quickly start using models with default settings but also adjust options to fit your needs.

You can change load parameters such as context length and how much of the model is offloaded to the GPU, giving you the flexibility to tailor the experience to your requirements. For detailed guidance, visit the official documentation.
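
One setting that matters for GPU offload is how many of the model's layers are placed on the GPU. The sketch below is a back-of-the-envelope estimate, not LM Studio's own logic: the function name `layers_that_fit`, the equal-size-layers assumption, and the 1 GB reserve are all illustrative choices.

```python
def layers_that_fit(total_layers, model_size_gb, free_vram_gb, reserve_gb=1.0):
    """Estimate how many layers can be offloaded to the GPU.

    Assumes every layer is the same size and keeps `reserve_gb` of VRAM
    free for the context cache and other overhead. Both are simplifications.
    """
    if total_layers <= 0 or model_size_gb <= 0:
        raise ValueError("total_layers and model_size_gb must be positive")
    per_layer_gb = model_size_gb / total_layers
    usable_gb = max(free_vram_gb - reserve_gb, 0.0)
    # Never report more layers than the model actually has.
    return min(total_layers, int(usable_gb // per_layer_gb))
```

For a hypothetical 8 GB model with 32 layers on a GPU with 5 GB of free memory, `layers_that_fit(32, 8.0, 5.0)` returns `16`, which suggests offloading about half of the model and leaving the rest on the CPU.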

Serve on the network

LM Studio can serve on the network, allowing other devices to access language models hosted on your machine. This feature enables multiple users to interact with the models from different devices, making collaboration easy.

By hosting the models on one machine, you can use LM Studio for various applications, such as chatbots or document analysis, without needing to install it on every client device.
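
A minimal sketch of how another device on the same network might reach the machine that serves the models: it builds the server URL from a host and port, then lists the models that server exposes. The address `192.168.1.50`, port `1234`, and the OpenAI-style `/v1/models` path are assumptions, so substitute the values shown in your own server settings.

```python
import json
import urllib.request


def server_url(host, port=1234, path="/v1/models"):
    """Build the URL of an OpenAI-style endpoint on a networked machine."""
    return f"http://{host}:{port}{path}"


def extract_model_ids(listing):
    """Pull model identifiers out of an OpenAI-style model listing."""
    return [entry["id"] for entry in listing.get("data", [])]


def list_remote_models(host, port=1234, timeout=5):
    """Ask the remote server which models it exposes."""
    with urllib.request.urlopen(server_url(host, port), timeout=timeout) as response:
        return extract_model_ids(json.load(response))
```

Calling `list_remote_models("192.168.1.50")` from a second computer would then return the identifiers of the models available on the serving machine, provided the server is running and reachable.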

Folders to organize chats

You can create folders to organize your chats. This feature helps you manage different conversations and topics easily. By sorting chats into specific folders, you can quickly find and reference previous discussions.

This organization improves your workflow and makes it simpler to navigate through various projects or questions. For more details on this feature, you can check the official documentation.

Multiple generations for each chat

In LM Studio, you can generate multiple responses for each chat. This feature allows you to explore different answers and ideas for your questions. By getting several options, you can choose the best response or mix and match parts from different generations.

This flexibility helps improve your understanding and enhances the quality of interactions with the language model.

How to migrate your chats from LM Studio?

First, look for an export option in the software settings. This feature allows you to save your chat history in a file format, like JSON or TXT.

Once you have the file, you can import it into another tool or store it for future reference. For detailed steps, you can look at the official instructions for LM Studio.

How to Install and Use LM Studio?

Download the installer from the official website and open it on your computer. Follow the steps shown on the screen to finish the installation.

After installing, open the program and choose a language model to start using it. You can type in your questions or tasks, and the model will generate responses.

Frequently Asked Questions:

1. Can LM Studio use a GPU?

Yes, LM Studio can use GPUs to improve performance. It supports NVIDIA and AMD GPUs, allowing faster processing for large language models. This feature helps in handling more complex tasks quickly, making it efficient for various applications.

2. Does LM Studio collect data?

LM Studio may collect data to improve its services, but the specific details depend on its privacy policy. Users should review this policy to understand what data is collected and how it is used. Always ensure your privacy is protected when using the software.

3. What is GPU in Lightroom?

In Lightroom, a GPU (Graphics Processing Unit) helps speed up image editing tasks by handling graphics and rendering more efficiently. Using a GPU allows for faster performance, smoother zooming, and quicker previews, especially when working with large photos.

4. Does LM Studio have an API?

Yes, LM Studio has an API that allows developers to integrate its features into their applications. This API enables users to interact with language models programmatically, making it easier to automate tasks and customize functionalities.
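
To illustrate what programmatic interaction can look like, here is a small sketch of a multi-turn chat helper that keeps the message history between calls. It assumes an OpenAI-style chat completions endpoint at `http://localhost:1234/v1/chat/completions` and a placeholder model name `my-local-model`. The `ChatSession` class and `http_transport` function are hypothetical helpers, not part of LM Studio.

```python
import json
import urllib.request


def http_transport(payload, url="http://localhost:1234/v1/chat/completions"):
    """Send the payload to the (assumed) local server and return parsed JSON."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


class ChatSession:
    """Keep the running message history for a multi-turn conversation."""

    def __init__(self, model="my-local-model", transport=http_transport):
        self.model = model  # placeholder model name
        self.transport = transport
        self.messages = []

    def ask(self, prompt):
        """Send the prompt along with prior history and record the reply."""
        self.messages.append({"role": "user", "content": prompt})
        # Pass a copy so the transport sees the history as it was when sent.
        body = self.transport({"model": self.model, "messages": list(self.messages)})
        reply = body["choices"][0]["message"]["content"]
        self.messages.append({"role": "assistant", "content": reply})
        return reply
```

Because the transport is passed in as a parameter, the same class can be exercised with a stand-in function during testing and with `http_transport` against a running server.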

5. What is GPU offload in LM Studio for gaming?

GPU offload in LM Studio is not a gaming feature. It means the GPU, rather than the CPU, handles some or all of a language model's work, which speeds up text generation. Games use the same GPU for graphics rendering, so running a demanding game and a GPU-offloaded model at the same time means both compete for the GPU's memory and processing power.

Conclusion

In conclusion, LM Studio is a powerful tool for running large language models on various operating systems, utilizing both NVIDIA and AMD GPUs for enhanced performance. It offers features like document interaction, chat organization, and flexible settings. This makes it an excellent choice for users looking to explore AI capabilities effectively.